In This Article

- 01 Why I wrote this now

- 02 Where are you right now

- 03 What does 'agentic' actually mean

- 04 Stop thinking in tools. Start thinking in systems.

- 05 The knowledge base is the real commercial asset

- 06 The Signals Layer

- 07 Strategy is the missing layer in most AI deployments

- 08 Execution - the use case library

- 09 Emma (Our Marketing Agent)

- 10 Memory and observability - the compounding loop

- 11 The tooling landscape

- 12 What this won’t fix

- 13 Who owns what as you scale

- 14 Trust, security, and compliance

- 15 Build for now and the future

- 16 How to actually start

- 17 Why this matters right now

- 18 APPENDICES

01 Why I wrote this now

I wrote this article because I'm tired of AI posts that tell you what's possible without telling you how to build it.

You know the kind of post I mean. It opens with a robot image, spends 2,000 words re-explaining large language models, and ends with a vague conclusion about how companies should “embrace innovation.” It sounds smart, but it does not help an operator build anything real.

That’s not what this is.

Everything here comes from building. At Succession, we've been on the forefront of using AI in go-to-market. We use AI every single day and for context have used over 10 billion tokens in the last few months. We've built internal tools to make our jobs faster and free external tools we release to the community. But where the real shift happened is when we started building agents that do real work inside our organization.

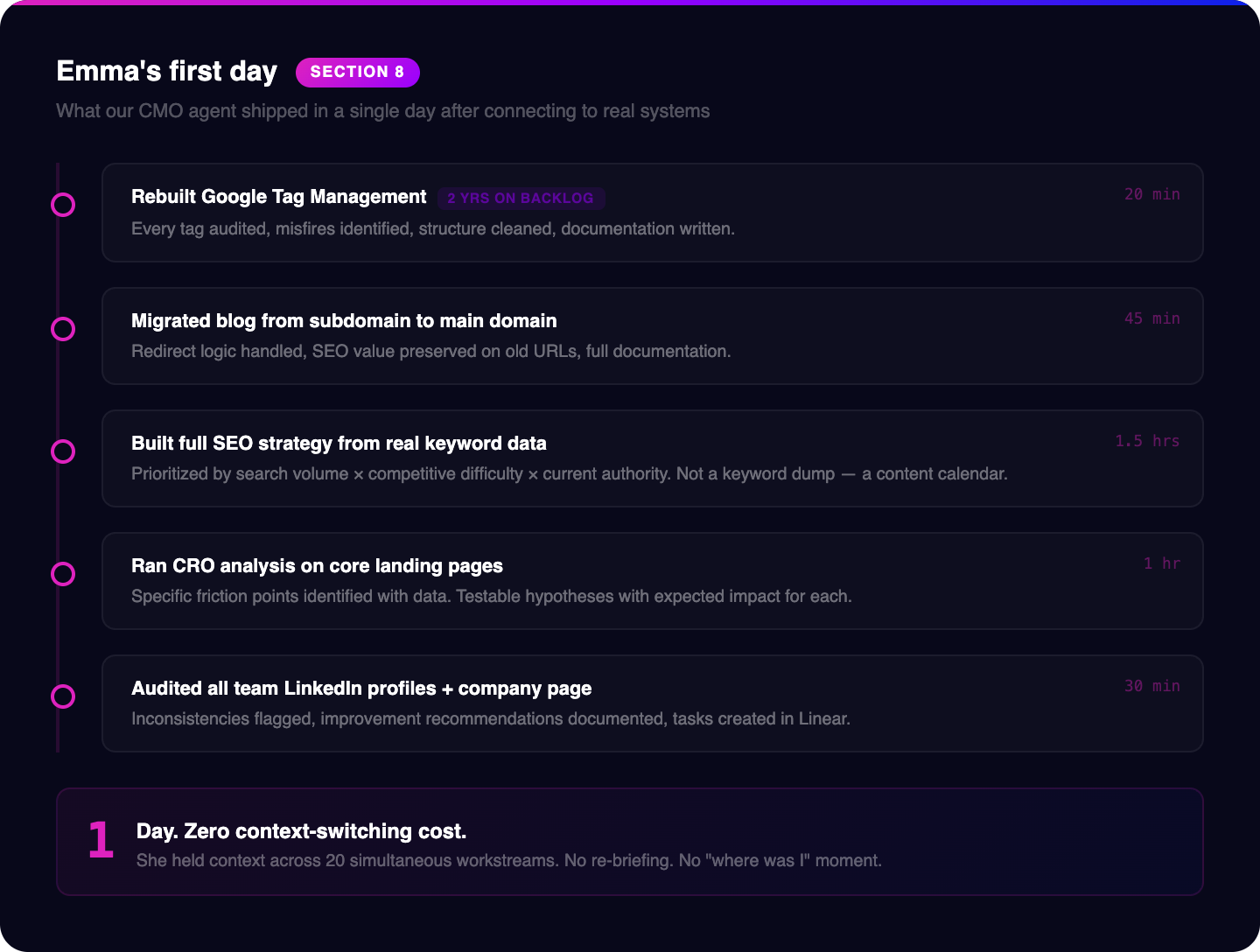

Emma is our CMO agent. She runs our marketing operations. She handles SEO strategy, content pipeline, CRO, campaign execution, analytics. She doesn't sleep. She doesn't forget context between Monday and Thursday. Neo is our support agent. He handles troubleshooting, bug fixes, and general technical support for the team, work that used to bottleneck through me. We're deploying more agents across the business, each one scoped to a specific function with access to the systems and context it needs to operate. What you're reading is what we've learned building them.

I should be clear about something. I am not an engineer. I don't write production code. I'm a commercial operator who got curious about what these tools could do, started building, and learned by running things and breaking them. Everything in this article was built by someone with domain expertise and persistence, not a computer science degree. If you're reading this thinking "this sounds technical and I'm not technical," you're exactly who I wrote it for.

Succession has worked with over 100 life science companies on commercial execution. Everything from instrument makers, reagent companies, CROs, CDMOs, diagnostics, software, and lab automation businesses. We've written the outbound messaging, built the targeting, and run the campaigns. That body of work, thousands of campaigns, millions of messages, tens of thousands of prospect replies, is the dataset behind everything in this article. When I describe what works in cold outreach to a VP of Quality at a mid-size CDMO, it's because we've sent that message and measured the result. When I describe how a knowledge base should be structured, it's because we've built and rebuilt ours across dozens of client engagements. This is not a framework invented in a strategy offsite. It's pattern recognition from doing the work.

The core claim in this article is: AI is not just making commercial teams faster. It is restructuring how revenue work gets done.

Sales and marketing are merging into a single AI-managed revenue function. Not philosophically, but structurally. The old structure, where marketing generates leads, sales follows up, RevOps cleans up the mess, and nobody shares a common system of memory, is starting to break down. What replaces it is a more continuous operating model. One where knowledge is centralized, signals are monitored constantly, actions are triggered automatically, performance is logged, and the system improves with every cycle.

Companies that see this clearly and build toward it now will have a structural advantage that compounds. The unit economics of their commercial function will improve every quarter as the system learns. The companies that wait will still be debating whether to invest in AI when their competitors have already built a two-year head start into their cost structure.

This article is for commercial leaders and founders at life science companies. Instrument makers. Reagent companies. CROs, CDMOs, software vendors, lab automation companies. If you sell to scientists, clinicians, or biopharma operators, this is for you.

Here is what we are going to cover:

- Where most teams actually are today

- What “agentic” really means and what it does not

- The architecture behind an AI-managed revenue function

- Why knowledge bases and signals matter more than most teams realize

- What useful execution workflows look like in practice

- What this will not fix

- How to start without overcomplicating it

My goal is not to help you sound informed about AI.

My goal is to help you build.

By the end of this, you’ll have everything you need to get started. The real question is, will you do anything about it? Or will you continue to believe AI is hype and it’s not ready?

Let's start with where you actually are.

02 Where are you right now

I tend to come across a couple of specific archetypes of AI users. There are those who used the free version of ChatGPT a year ago and said it’s overhyped and not useful and they haven’t touched it since. There are those who use it daily as a chat agent but are still on the free or low-tier plans that don’t have any reasoning or context on your company which means you have to be a master prompt engineer to get any meaningful output.

There are others who are deeply excited by the technology but get overwhelmed by how much is going on so they get decision paralysis and do nothing. So they know what’s possible and can talk about it with people, but they’ve never actually put any of that knowledge to work. They see a silly output from AI that goes viral on IG and just assume this is what AI isn’t ready for prime time.

Then there are those who are AI-pilled. They’re on X (Twitter) every day, reading what’s new and what’s possible and immediately implementing what they read. They’re constantly tinkering and experimenting and are genuinely curious people who want to push the boundaries of what’s possible.

Before you can build toward anything, you need an honest read on your starting point. Most commercial leaders I talk to overestimate their current state by one or two levels. That gap costs real money, because they're trying to implement Level D solutions on top of Level B infrastructure and wondering why it's not working.

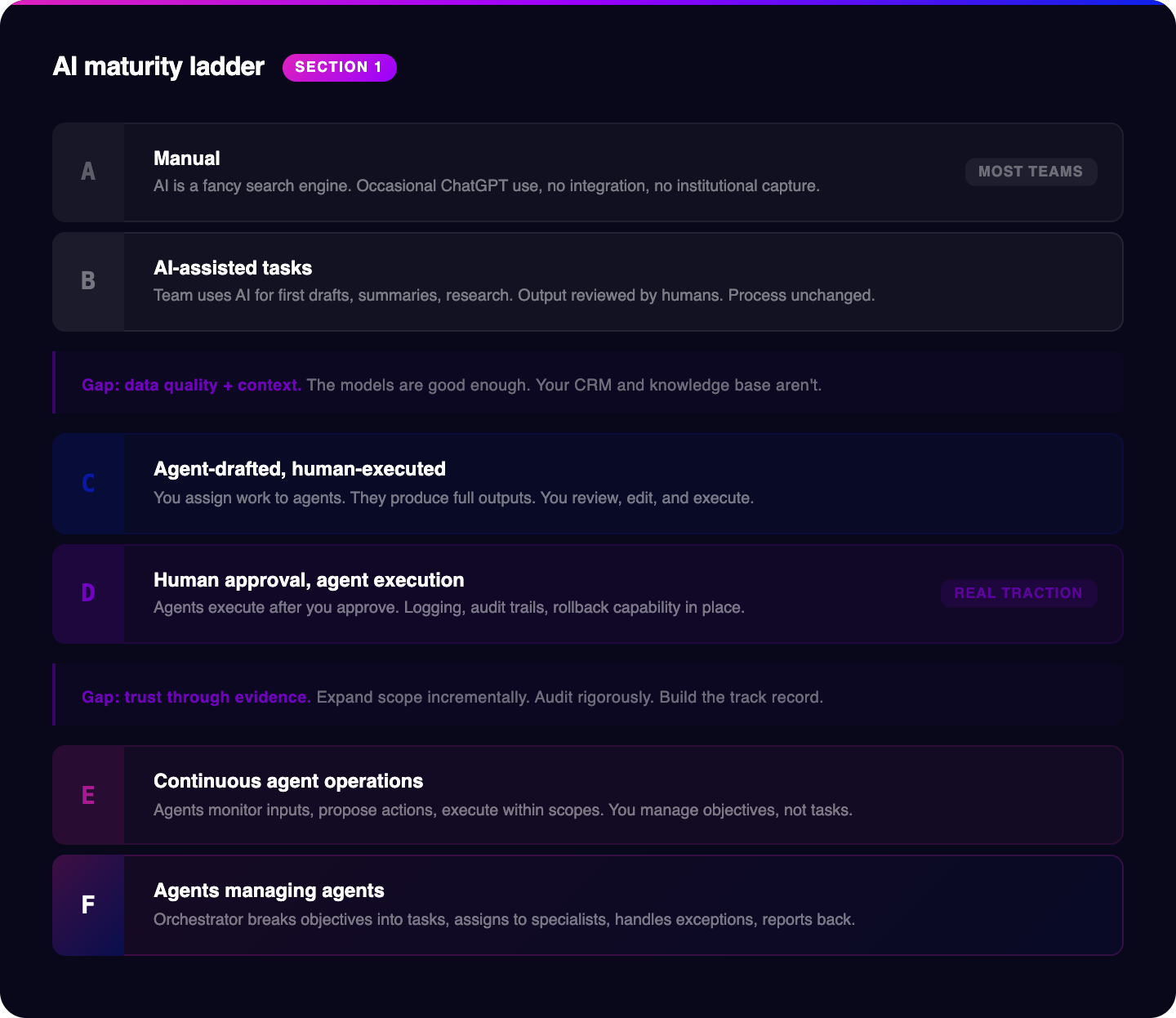

Here are the six levels. Be honest about which one describes your operation today.

Level A: Manual

AI is a fancy search engine. Someone on your team uses ChatGPT occasionally to draft an email or summarize a document. There's no systematic use, no integration with your actual stack, no shared prompts, no institutional knowledge being captured. If the person who uses it most leaves, nothing changes for the organization. Most commercial orgs in life sciences were here 18 months ago. A surprising number are still here.

What this feels like:

everyone works in isolation and you still have to do all the work.

Biggest weakness:

nothing compounds.

Next move:

document your commercial fundamentals and pick one recurring task to improve.

Level B: AI-assisted tasks

This is where most people reading this article are sitting right now. Your team uses AI tools to accelerate discrete tasks: first drafts of emails and content, research summaries, call note cleanup, competitive brief generation. The output is reviewed and executed by a human. AI is making individual contributors faster, but it's not changing how your commercial system works. The process is the same. The person is just slightly faster. This is better than nothing. It's not the structural shift.

What this feels like:

people work a bit faster.

Biggest weakness:

the operating model has not changed.

Next move:

connect one real data source and deploy one repeatable workflow.

Level C: Agent-drafted, human-executed

You've started assigning work to agents rather than using them as autocomplete. You write a brief, the agent produces a full outreach sequence, a content plan, a research report. You review it, edit it, and execute it yourself. The agent is a contractor, not an employee. You still hold every decision and execute every action. The quality ceiling here depends almost entirely on how good your prompting and your knowledge base are. Most people who think they're at Level C are actually at Level B with better prompts.

What this feels like:

the agent behaves like a contractor.

Biggest weakness:

every action still waits on a human.

Next move:

move one low-risk workflow from draft-only to approved execution.

Level D: Human approval, agent execution

This is where real traction starts. The agent doesn't just draft work, it executes work after you approve it. The agent sends the email. The agent updates the CRM record. The agent triggers the next workflow step. Critically, you have logging, audit trails, and rollback capability. You can see exactly what the agent did and undo it if it was wrong. This requires actual infrastructure: integrations, permissions models, observability tooling. Most life science commercial teams aren't here yet. The ones who are feel the difference immediately.

What this feels like:

real leverage where AI feels like an employee.

Biggest weakness:

most teams discover their data and governance gaps at this stage.

Next move:

add observability, permissions, and tighter workflow design.

Level E: Continuous agent operations

Agents aren't waiting for you to assign tasks. They're monitoring defined inputs, proposing actions within defined parameters, and executing within defined scopes. You review outcomes. An agent is watching your target account list for hiring signals and proactively doing outreach. Another is monitoring deal velocity and flagging accounts that have gone quiet. Another is running A/B tests on your outbound sequences and reporting weekly on what's working. Another is monitoring SEO keywords and ranking, and drafting new articles. Another is monitoring web traffic and bounce rates to optimize your landing pages. You've shifted from managing tasks to managing objectives.

What this feels like:

you manage outcomes more than tasks.

Biggest weakness:

trust and governance become central.

Next move:

expand scope carefully and build stronger feedback loops.

Level F: Agents managing agents

You set the objectives and the guardrails. The rest is a hierarchy of autonomous systems. An orchestrator agent breaks down objectives into tasks, assigns them to specialist agents, monitors execution, handles exceptions, and reports back. You're reviewing performance dashboards and adjusting strategy, not individual actions. This is not science fiction. A small number of commercial operations are running at this level today. They are building a compounding advantage that will be very difficult to close in two years.

What this feels like:

a true operating system.

Biggest weakness:

poor governance or weak architecture becomes very expensive.

Next move:

refine orchestration, approvals, and measurement.

Most life science commercial organizations are at Level A or B. A small number are at Level C. Very few are at Level D or beyond.

The gap between B and D is not a technology problem. The models are good enough. The tooling exists. The gap is data quality, context, and governance. Agents need clean, structured, accessible data to act on. They need defined permissions to act within. They need logging infrastructure so you can see and trust what they're doing. If your CRM is a mess and your content is scattered across six platforms, your agents will be confused and your outputs will reflect that.

The gap between D and F is a trust problem. Not trust in the technology philosophically, trust built through demonstrated reliability in your specific environment. The path from D to F is cumulative evidence that the system works, edge cases are handled, and decision quality is consistent enough to warrant broader delegation. You build that evidence intentionally, by expanding scope incrementally and auditing rigorously.

What to do now

- Figure out honestly where you are. Then ask one more question: what is the next level that would create real value for us in the next 90 days?

- That is the only level you need to care about right now.

Take the quick assessment below (it’s only a few questions and you’ll know exactly where you stand)

03 What does 'agentic' actually mean

The word "agentic" is getting used to describe everything from a GPT wrapper to a fully autonomous pipeline. That range of meaning is a problem, because it means people are comparing completely different things and drawing completely wrong conclusions about what's possible.

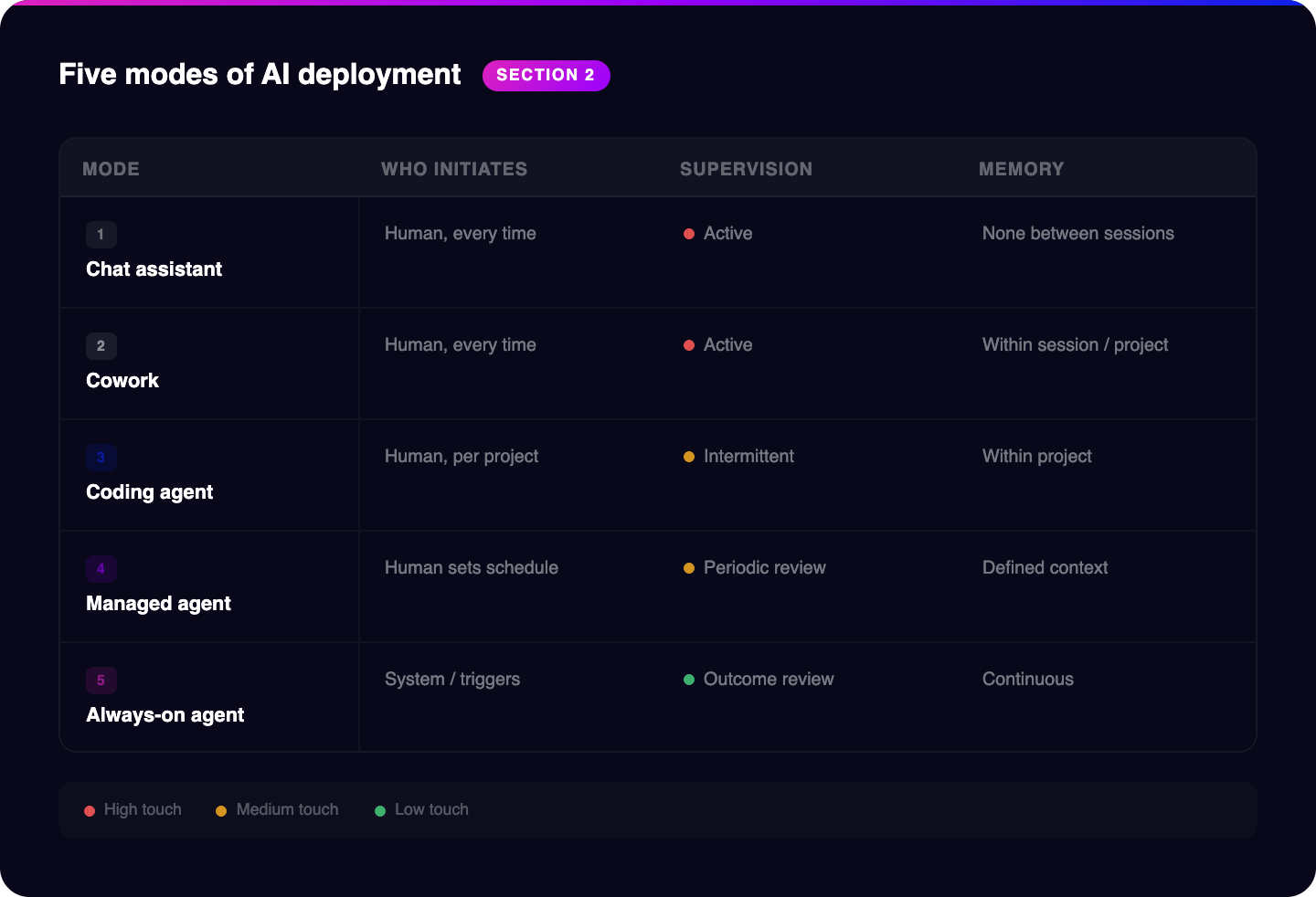

There are five distinct modes of AI deployment. They're not a spectrum where more is always better. They're different tools with different use cases and different infrastructure requirements. Let me walk through each of them.

The first mode is the chat assistant, and it's what most people mean when they say they're "using AI." A single session, you do everything, the model helps you think and draft. It's genuinely useful for one-offs: summarizing a document, drafting an email, researching a company before a call. The ceiling is that it's you-dependent. You have to start it, direct it, execute the output. It doesn't learn from session to session. It has no memory of what you worked on last Tuesday. It's a very good tool. It is not a system.

The second mode is what I'd call cowork. This is multi-step knowledge work in a shared workspace, closer to a persistent working environment than a chat window. Tools like Notion AI or a configured Claude Project start to look like this. The agent can hold context across a longer task, pull from a knowledge base you've built, and work through a problem in multiple steps. The key constraint: you still have to start each task. It requires you to show up, frame the work, and check the output. Cowork becomes genuinely useful when you've invested in the knowledge base and the integrations. Without them, it's a nicer chat interface.

The third mode is coding agents. Claude Code, Codex, and their equivalents. This is worth calling out separately because it changes something specific and important for commercial teams: the cost of building internal tools collapses. "We should build a tool that automatically pulls our CRM data and formats it for competitive reporting" used to be a two-week engineering project. With a coding agent, it's a day of iteration. This matters because it means commercial operators can build the integrations and automations they actually need without waiting in an engineering queue.

The fourth mode is managed agents. Long-running, asynchronous work without constant supervision. You define the task, set the parameters, point the agent at the right data sources, and come back to the output. Overnight account enrichment. Continuous monitoring of a signal feed. Scheduled content generation. The agent runs without you watching. This is where the productivity math starts to change fundamentally.

The fifth mode is always-on agents. Persistent, proactive, continuous memory. The agent isn't waiting for a task. It's watching, processing, updating, and acting within its defined scope all the time. Emma, our marketing agent at Succession, runs in this mode. She's monitoring signals, generating content, testing and adjusting outreach, and maintaining a live picture of our target account landscape while I'm doing other things.

Here's the one distinction that separates everything else: tools wait to be used. Agents do work while you sleep.

That's the structural change. Tools amplify human effort. Agents replace effort. The minute an agent can decide what to do next, execute it, observe the result, and adjust without waiting for a human prompt, you've crossed into a different kind of commercial operation.

| Mode | Who initiates | Supervision needed | Memory | Example use case |

|---|---|---|---|---|

| Chat / Assistant | Human, every time | Active | None between sessions | Drafting a one-off email |

| Cowork | Human, every time | Active | Within session / project | Building a content brief |

| Coding Agent | Human, per project | Intermittent | Within project | Building a CRM integration |

| Managed Agent | Human, sets schedule | Periodic review | Defined context | Overnight account enrichment |

| Always-on Agent | System / triggers | Outcome review | Continuous | Emma, revenue monitoring |

From my observations, the life sciences as a sector generally lags the broader commercial AI adoption curve by 12 to 24 months. Partly regulatory caution. Partly the complexity of the buyer. Partly a culture that are scientist first and commercial second. That lag, right now, is an asymmetric first-mover opportunity. The companies that get to Level D and E infrastructure in the next 12 months are going to look very different from their competitors by the time those competitors start trying to catch up.

What to do now

- List the AI workflows you use today and label each one: chat assistant, cowork, coding agent, managed agent, or always-on agent.

- Pick one workflow that should move from chat to cowork or from cowork to managed-agent territory.

- Pick the one where the stakes are low but the effort is high

04 Stop thinking in tools. Start thinking in systems.

Stop thinking about AI as a collection of tools. Start thinking about it as a layered operating system for your commercial function.

Every serious agentic revenue operation I've seen, across our own work and our clients', resolves into the same structural layers. Each layer has a specific job. Each layer depends on the one below it. Skip a layer and you end up with something that demos impressively and breaks under real-world pressure. Imagine ripping a page out of your SOP in the lab and expecting it to still work. The architecture isn't optional once you're past Level C. It's the difference between a system and a collection of expensive experiments.

There are eight layers. Here's how they work and where they break.

Layer 1: Data and Tools

This is your foundation, and it is almost certainly your biggest problem. CRM data, marketing automation records, analytics, call recordings, product usage data, documents, ad performance, campaign results. IQVIA reported in 2025 that 36% of life science organizations say their data is insufficient to support AI initiatives. I believe that number is conservative, because most organizations don't know what "sufficient" actually means until they try to do something specific with the data.

Agents amplify what's in front of them. If your CRM has incomplete contact records, inconsistent stage definitions, and activity logs that reps fill out reluctantly with minimum required information, your agents will work with exactly that. Fix the data before you build the agents.

AI can also help fix these data gaps and integrations for you. But this has to be where you start. Unsexy, but a messy foundation doesn't get cleaner when you put AI on top of it. It gets messier, faster.

What breaks without it:

unreliable output, shallow recommendations, incorrect actions.

What good looks like:

a small set of trusted systems with usable, current, accessible data.

Layer 2: Connectivity (MCP)

Model Context Protocol is the USB-C of the AI stack. Before MCP, connecting an AI agent to a new data source or external system meant writing a custom integration. Then writing another one for the next system. And another. The N-by-M problem: N agents, M systems, N times M custom connectors, each one a maintenance burden. MCP standardizes how agents connect to external systems, so a connector built once works across any compliant model.

Companies that invest in MCP-compatible connectivity today are building composable, durable stacks. Every new agent you deploy can use the connectors that already exist. Companies that skip this layer have a tangle of brittle custom integrations that breaks every time a vendor pushes an API update or you switch models. It's technical debt that compounds. Many of the tools your using probably already have MCPs built that you can leverage immediately.

What breaks without it:

manual handoffs, duplicated effort, fragile workflows.

What good looks like:

agents can reliably retrieve context and take defined actions in the systems that matter.

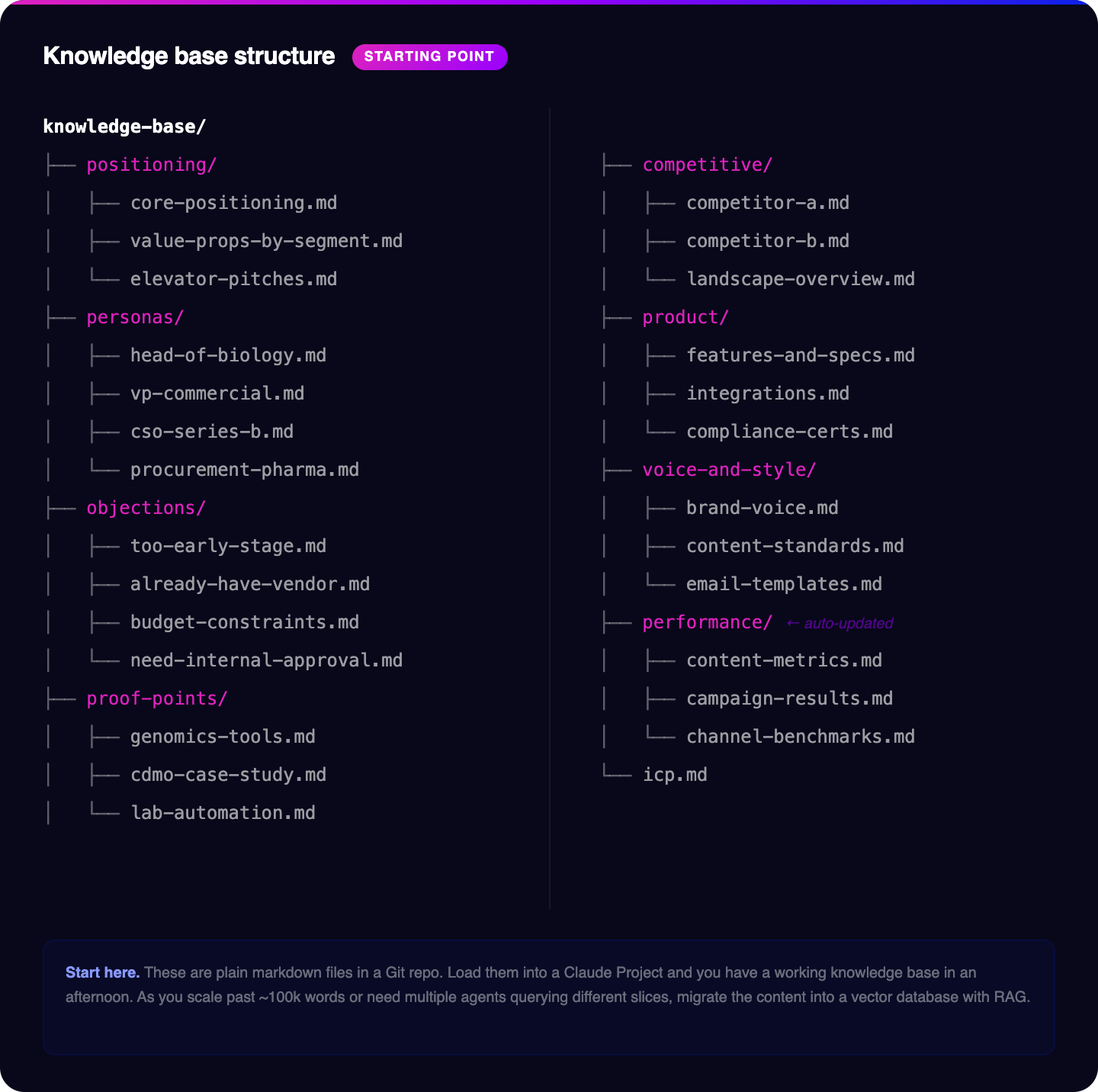

Layer 3: Knowledge Base

Not the Google Drive folder or SharePoint where content and decks go to die. A properly built knowledge base is a living, queryable, versioned operating system of organizational truth. It's the single most important input to every agent in your stack.

Without a good knowledge base, your agents are generic. They'll produce outputs that sound like a well-informed outsider with no specific knowledge of your business, your customers, or your competitive position. With a properly built knowledge base, your agents are grounded in your specific reality. They know your positioning, your personas, your proof points, your objections, your competitive differentiation. The quality ceiling of every agent in your stack is set by the quality of your knowledge base. I'll go deep on this in Section 4.

What breaks without it:

generic output, vague messaging, inconsistent reasoning.

What good looks like:

a living, structured, queryable body of commercial truth.

Layer 4: Signals and Intelligence

Raw data doesn't drive commercial decisions. Signals do. Layer 4 is the translation layer that takes raw inputs from Layer 1, normalizes them, scores them, and surfaces intelligence that agents and humans can act on. Intent data, deal velocity changes, engagement decay patterns, objection frequencies, stage readiness scores, competitive mention spikes. This layer is what makes your commercial operation proactive rather than reactive.

Without Layer 4, you're running on lagging indicators. You find out a deal is at risk when the prospect stops responding. You find out a competitor is gaining ground when you lose a deal. Layer 4 tells you before that happens. Section 5 covers the full signal universe, because this layer is where life science commercial teams have the most untapped data and the least built infrastructure.

What breaks without it:

you always discover important changes too late.

What good looks like:

the system detects changes that matter and routes them into planning or action.

Layer 5: Strategy Council

This is where the architecture gets interesting. Layer 5 is not where agents execute work. It's where agents produce plans. Multiple models reason over the same grounded context from your knowledge base and signal layer, debate tradeoffs, and produce a strategy with a confidence estimate and a defined approval path. One model might prioritize speed to conversion. Another might flag risk to relationship. The output isn't a single recommendation, it's a reasoned plan that a human can evaluate and approve before anything happens.

This is where humans stay in the loop the longest, and intentionally so. Strategy errors compound when execution becomes autonomous. A bad campaign strategy executed by fast agents does more damage than the same bad strategy executed by a slow human team. The investment in Layer 5 is what prevents your agentic system from being fast in the wrong direction.

What breaks without it:

the system gets fast in the wrong direction.

What good looks like:

recommendations include tradeoffs, confidence, and approval paths.

Layer 6: Execution Agents

These are the specialists that actually ship work. Channel agents handle email, LinkedIn, content, and ads. Sales agents manage sequence execution, follow-up timing, and CRM updates. Content agents write content in your brand’s voice and analyze what’s actually working. Ops agents handle data enrichment, list management, and reporting. Each execution agent is scoped narrowly and given access to exactly the tools and data it needs for its specific function, and no more.

The failure mode here is scope creep. An execution agent that has access to more than it needs creates compliance risk and unpredictable behavior. In life sciences specifically, tight scoping isn't optional. Define what each execution agent can touch, log everything it does, and build human review gates wherever the stakes are high enough to warrant them.

What breaks without it:

you have insights but no leverage.

What good looks like:

narrowly scoped workflows that operate reliably inside defined boundaries.

Layer 7: Observability and Memory

Every action an agent takes produces a signal. Layer 7 captures those signals and feeds them back into the system. Which subject lines drove opens? Which follow-up sequences converted to meetings? Which objection handling approaches worked for which personas? Layer 7 is what turns a collection of agents into a learning system. Without it, you're running the same campaign logic over and over and wondering why results plateau.

The compounding loop is real. Each cycle of action, observation, and update makes the next cycle smarter. Organizations that build observability infrastructure early accumulate a performance advantage that accelerates over time. It's also the layer that makes your agents auditable, which matters enormously.

What breaks without it:

no learning loop, weak trust, impossible debugging.

What good looks like:

every important action is logged, measurable, reviewable, and reusable.

Layer 8: Trust, Governance, and Permissions

What can each agent read? What can it change? What requires human approval? What gets logged and for how long? Layer 8 is the governance layer, and it's not an afterthought you build after the system is live. It's something you design from the beginning. The EU AI Act is fully applicable as of August 2, 2026. If you're building agentic systems that touch EU data or EU markets, you need to be designing for it now. That means documented decision logic, human oversight mechanisms for high-risk decisions, and explainability for consequential outputs.

What breaks without it:

avoidable risk, stalled adoption, fragile trust.

What good looks like:

clear permissions, explicit approvals, documented oversight, auditable action.

What to do now

- Map your current setup against the 8 layers: data, connectivity, knowledge base, signals, strategy, execution, observability, governance.

- Start with your knowledge base. This is something you can build quickly without needing complete data overhauls and will give you the biggest leap in what AI can do for you.

05 The knowledge base is the real commercial asset

Most life science companies already have valuable commercial knowledge. The problem is not that it does not exist. The problem is that it is scattered, contradictory, outdated, and unusable.

Some of it lives in slide decks. Some in a founder’s head. Some in Slack threads. Some in call notes. Some in old messaging docs. Some in one-pagers nobody remembers. Some in a folder structure that made sense two years ago and does not now.

That is not a knowledge base.

That is storage.

A knowledge base built for agentic commercial operations is not a filing cabinet. It is a living system that agents can query in real time to make grounded decisions and produce specific output. Every agent in your stack, from the one drafting an outbound email to the one producing a deal strategy, pulls from it constantly. The quality of that pull determines whether your agent outputs sound like you or sound like a well-meaning intern who read your website.

Here is what belongs in it.

Positioning

You need one opinionated articulation of what you do, who it is for, what problem it solves, what makes you different, and why the right buyer should care.

If your positioning is vague, your agents will be vague.

Weak positioning statement:

- “We help life science companies accelerate innovation through our comprehensive platform.”

Useful positioning document:

- “We help growth-stage life science commercial teams generate more qualified meetings by combining life science-specific targeting, message strategy, and AI-powered execution. We are chosen over generalist lead-gen agencies when scientific nuance and GTM speed both matter.”

Agents cannot rescue weak specificity.

ICP definition

Not “biotech and pharma companies.” Specific characteristics.

What kinds of companies actually fit? What sizes? What stages? What scientific or commercial environments? What disqualifies them? When do you usually win? When do you consistently lose?

A good ICP document makes exclusion explicit, not just inclusion.

Persona library

Not marketing avatars. Signal maps.

What does this buyer care about at this stage? What language do they use when they are interested? What objections signal real concern versus polite stalling? What proof points move them? What makes them tune out?

A Head of Biology at a Series B biotech is not the same buyer as a Senior Scientist at a a pharma company, even if both appear in “buyer persona” slides.

Objection library

Every team encounters the same objections over and over and then treats them as if they are new every time. Most teams have less than 10 real objections that cover 90% of cases.

A good objection library maps:

- The objection itself

- The segment where it appears

- The stage where it appears

- What response has worked

- What evidence supports that response

This turns team memory into reusable system memory.

Proof points

Specific results. Dates. Deal sizes. Industries. Use cases. Scientific buyers in life sciences are trained to interrogate evidence. They read the methodology section. They check the sample size. They ask who did the study. "Our clients see great results" is not a proof point. It's a placeholder for a proof point that someone meant to fill in.

"A genomics tools company similar to yours reduced time-to-qualified-meeting by 40% in Q1 2025 by replacing their manual qualification process with our automated scoring layer" is a proof point.

Agents using it in outreach, content, or deal conversations will produce outputs that actually move scientifically-trained buyers. Agents using vague claims will produce outputs that get dismissed in 30 seconds by people who review scientific papers for fun.

Specificity changes the quality of everything downstream.

Competitive intelligence

Live, not static. A battlecard that was accurate when your competitor had a different product and a different pricing model is not competitive intelligence. It's a historical document. What changed about your top three competitors last month? What did they announce at the last major conference? What are their customers saying in public forums? What has their sales team been pitching recently, based on what your reps are hearing in deals? An agent querying your competitive intel layer should get information that's current. Building that requires a monitoring process and an update discipline, but it's entirely achievable with a managed agent watching the right sources. A static battlecard that lives in a PDF on OneDrive is not the same thing.

Content performance history

Which messages drove clicks? Which formats drove conversions? Which angles drove responses from which personas? In which segments and at which stages? This is the feedback layer that makes agent-generated content progressively better over time. Without it, your content agents are producing outputs optimized against general writing quality rather than your specific audience's demonstrated preferences. With it, they're iterating toward what actually works in your market with your buyers. The gap between those two is the gap between content that's competent and content that compounds.

One more thing worth saying clearly: life science companies have a structural advantage in building knowledge bases that most commercial operators don't. The regulated content culture in pharma, biotech, and medical devices, with its controlled document systems, version histories, review trails, and mandatory update cycles, maps almost perfectly onto the rigor a good knowledge base requires. The compliance discipline that felt like drag when you were trying to move fast is exactly the muscle you need here. Build on it. The companies in less regulated industries are trying to create that discipline from scratch.

The key is not to build the Sistine Chapel on day one. Get the basics in place, be thorough, but this knowledge base needs consistent love and attention to ensure all your outputs are grounded in truth.

A practical principle

Your knowledge base should answer this question clearly:

If a smart new hire joined tomorrow, could they understand how we sell, who we sell to, what wins, and what not to say without having to chase five people?

If the answer is no, your agents will struggle too

Where and how to build it

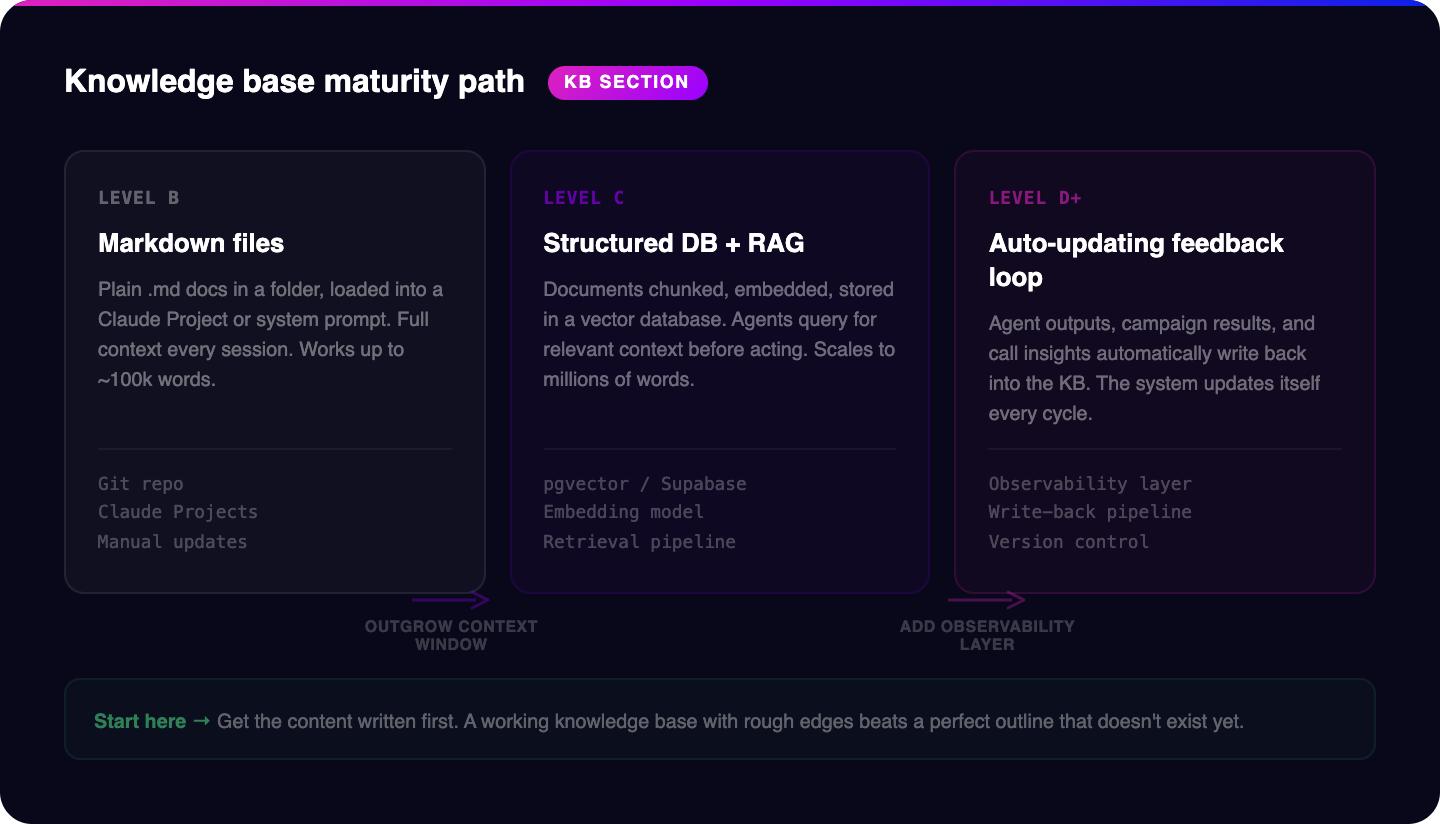

The simplest starting point is markdown files. Plain .md documents in a folder structure. Just text files organized by category:

Markdown is human-readable, version-controllable with Git, and directly consumable by every major AI model. If you load a well-organized folder of markdown files into a Claude Project or a system prompt, you have a working knowledge base in an afternoon. For most teams at Level B or C, this is the right starting point. Don't overthink the tooling. Get the content written first.

As your knowledge base grows beyond what fits in a single context window, or as you need agents to query specific sections dynamically rather than loading everything at once, you'll need a retrieval layer. This is where a database enters the picture.

The architecture that most production agentic systems use is called Retrieval-Augmented Generation, or RAG. Instead of giving your agent every document every time, you give it a librarian that pulls only what's relevant. The agent gets exactly the context it needs, nothing more, nothing less.

The practical implementation has three components. First, a document processing layer that takes your markdown files, PDFs, call transcripts, and other source material, splits them into chunks of a few hundred words each, and converts those chunks into vector embeddings using an embedding model. Second, a vector database that stores those embeddings and enables fast similarity search. Third, a retrieval step in your agent workflow that queries the database before the agent generates any output, so every response is grounded in your specific organizational knowledge.

The maturity path looks like this. At Level B, your knowledge base is markdown files loaded into a Claude Project or system prompt. Every agent session gets the full context. This works until your knowledge base exceeds roughly 100,000 words or until you have more than three or four agents that each need different slices of the knowledge base.

At Level C, you move to a structured database with RAG. Your documents are chunked, embedded, and stored in a vector database. Agents query for relevant context before acting. This scales to millions of words and supports dozens of agents pulling from the same source of truth.

At Level D and beyond, you add a feedback layer. Agent outputs, campaign results, and call transcript insights are automatically processed and written back into the knowledge base. The system updates itself. New objection patterns get logged. New proof points get added. Content performance data refines the knowledge base without a human manually updating a file.

One architectural decision worth getting right early: separate your knowledge base content from your operational data. Your knowledge base is your positioning, personas, proof points, and competitive intelligence. Your operational data is your CRM records, campaign metrics, and deal history. They live in different systems and serve different purposes. Your knowledge base grounds agent reasoning. Your operational data provides the specific context for a specific task. An agent drafting outreach to a genomics company should pull the genomics persona and relevant proof points from the knowledge base, and pull the specific account's deal history and recent signals from the CRM. Mixing these into a single store creates retrieval problems where the agent gets CRM records when it needed positioning guidance, or vice versa.

The key principle is the same regardless of where you are on this path: the knowledge base is a living system, not a completed project. Schedule a monthly review. Assign ownership. Treat it like you would a product, something that gets better every sprint, not a document that gets written once and forgotten. The quality of every agent in your stack is capped by the quality of what it has to draw from.

What to do now

- Build the first version of your knowledge base this week.

- Start with just three docs: ICP, positioning, and objections/proof points.

- Remove outdated or conflicting docs before loading anything into an agent workflow.

06 The Signals Layer

The richest intelligence source in your commercial operation is almost certainly your call recordings. Not because call recordings are uniquely valuable in isolation, but because AI is exceptionally good at synthesizing unstructured conversational data at scale, and almost no life science commercial team has built the translation layer that turns those conversations into organizational intelligence.

Think about what actually happens in a typical sales conversation. A prospect mentions they've been evaluating a competitor and had a concern about implementation complexity. They reference a recent FDA guidance that's affecting their timeline. They use a specific phrase about their data integrity problem that signals exactly where they are in their buying journey. The rep hears all of this. Some of it goes into a CRM note, usually a truncated summary written by someone already mentally moving to the next call. Most of it evaporates. The competitive mention disappears. The regulatory context disappears. The exact language the prospect used to describe their problem, the language that should be showing up in your outbound messaging, disappears.

In a mature signal stack, that same call is transcribed automatically, analyzed for topics, objections, competitor mentions, and sentiment, mapped into a shared schema, and surfaced to the relevant layers within hours. The competitive mention triggers an update to the competitive intel layer. The regulatory language gets flagged for messaging review. The objection gets logged against the segment and stage. The call notes become input into a system that learns from every conversation.

That's just one of the broken translation layers that almost every commercial team has. Here's how to fix it by building out the full signal universe.

Internal signals

Your call recordings are the most underused asset in this category. Transcription is table stakes. The value is in topic detection, structured extraction of objections and next steps, sentiment mapping, and comparison across accounts and segments. Gong and Zoom do some of this. Custom extraction layers can go further, particularly for life sciences-specific topics like regulatory timelines, validation requirements, and compliance concerns that generic models don't surface well.

CRM activity patterns are the second major internal signal source. Velocity changes, stage drift, engagement decay, response time trends. A deal that was moving at a consistent pace and has slowed in the last two weeks is telling you something. A contact who used to reply within a day and is now taking three days is telling you something. These patterns exist in your CRM right now. Almost no commercial team has a systematic process for surfacing them in real time. An agent monitoring these patterns and flagging accounts that show risk signatures is not a complex build. It's a managed agent with access to your CRM activity data and a clear alerting schema.

Product usage data matters enormously if you sell software or digital tools. Feature adoption, login frequency, usage depth, and usage decay are all leading indicators. A customer who was logging in daily and has dropped to twice a week is showing you a churn signal weeks before they say anything. A customer who's using one feature heavily but hasn't discovered a second feature that's directly relevant to their stated goals is showing you an expansion opportunity. Usage data as a signal source is one of the most underused inputs in life science commercial operations.

Email and marketing engagement rounds out the internal picture. Click patterns, open rates by segment and message type, form completions, content downloads, webinar attendance. All of these are signals about what specific buyers care about right now. The problem is that most marketing automation platforms treat these as campaign metrics rather than account-level intelligence. The translation into account-level signal is a Layer 4 job.

External signals

Intent data tells you who is actively researching your category right now. Tools can capture in-market behavior across millions of B2B content sources. When a target account's employees start consuming content about lab automation, instrument validation, or clinical data management, that's a signal. The sophistication in life sciences is in knowing which intent topics actually correlate with purchase readiness in your specific segment, which requires calibration against your own conversion data.

Hiring signals are one of the most powerful and least captured signal types in life sciences commercial operations. A biotech that posts a Director of Regulatory Affairs role is operationalizing. A CDMO that's hiring three quality engineers is about to scale GMP capacity. A startup that posts its first VP of Commercial is about to build a sales function and will need the commercial infrastructure to support it. These are specific, concrete, and directly tied to buying windows. LinkedIn, Indeed, and specialized biotech job boards are all publicly accessible. Almost nobody monitors them systematically because nobody has built the monitoring layer. An always-on agent watching your target account list for hiring signals is a straightforward build with disproportionate return.

Grant funding from NIH, EU Horizon, UKRI, and equivalent bodies creates predictable budget windows, especially for academic labs and early-stage biotechs. A principal investigator who just received a five-year R01 grant for genomics research has budget to spend on tools and consumables. The grant database is public. The award amounts are public. The research focus is public. If you sell into academic research, not monitoring NIH Reporter and equivalent sources is leaving real pipeline on the table.

Clinical trial updates are tier-one signals for anyone who sells into clinical operations. ClinicalTrials.gov is a public feed of IND filings, phase transitions, enrollment updates, and completion reports. A company moving from Phase II to Phase III needs a fundamentally different commercial infrastructure. They're hiring clinical operations staff, scaling their data management, evaluating new CROs, and making significant purchasing decisions. A company initiating a Phase I trial is at a much earlier stage with different needs. If you sell anything that touches clinical operations, cell and gene therapy manufacturing, clinical data management, patient recruitment, site management, this signal source should be feeding your prospecting logic continuously.

FDA warning letters are one of the most direct signals in life sciences commercial work, and one of the least monitored. If you sell quality management software, validation services, or compliance training, a warning letter to a target account is a direct opening. The account has a documented problem. They are under regulatory pressure to fix it. They are almost certainly evaluating solutions. This is a public record. FDA publishes warning letters on its website. A managed agent monitoring that feed and cross-referencing it against your target account list and ICP criteria is not complex to build. The question is why you're not doing it.

Drug pipeline updates are strategic context for understanding what a biopharma or biotech company's commercial situation looks like. PDUFA dates, pipeline expansions, Phase III failures, FDA approval decisions. A company that just received approval for a first commercial product has a completely different budget picture and infrastructure need than they did in development. A company that just lost a Phase III after years of investment is under cost pressure and possibly restructuring. Understanding pipeline status for your target accounts is free intelligence that almost no commercial team systematically tracks.

Press releases and public announcements tell you what a company is prioritizing at the organizational level. Partnership announcements signal strategic direction. Facility openings signal investment in manufacturing or operational capacity. Leadership hires signal where a company is placing strategic bets. The language in press releases is chosen carefully. Reading it literally and extracting the priority signals is a job an agent can do continuously across hundreds of target accounts simultaneously.

Fundraising rounds give you a precise read on who is about to hire, what they'll buy, and on what timeline. A Series B biotech with a cell therapy focus just raised $80 million. You can map that to hiring plans, infrastructure investments, and technology purchasing windows with reasonable confidence based on comparable companies at the same stage with the same focus. Crunchbase, PitchBook, and public press coverage all surface this data. The value is in the systematic processing, not the data itself.

10Ks and earnings calls are free market intelligence that almost no commercial team in life sciences reads systematically. Revenue breakdown by segment. R&D investment priorities. Competitive positioning language from leadership. Risk factors that signal operational constraints. If your target accounts are public companies, you have a detailed quarterly window into their priorities, pressures, and investment thesis. The information is dense, which is exactly why an agent that can synthesize a 150-page 10K into relevant commercial intelligence in minutes is valuable.

LinkedIn activity from executives at target accounts is a real-time signal about priority shifts. A CSO who starts engaging heavily with posts about AI in drug discovery is signaling a strategic interest before it shows up in any procurement process. A CFO posting about manufacturing cost optimization is telling you something about where their pressure is. A VP of Operations attending a regulatory technology webinar and posting about it is telling you exactly what to talk to them about. This is not surveillance. It's reading publicly available professional signals the way good salespeople have always read context, just at scale and with structure.

What to do now

- Pick 3 to 5 signals you actually want to operationalize.

- Start with the highest-value ones: call recordings, CRM activity, hiring signals, funding/company changes, and website or inbound engagement.

- Define what action each signal should trigger: monitor, route, research, outreach, or escalate.

07 Strategy is the missing layer in most AI deployments

Most teams jump straight from data to output.

They connect systems, generate recommendations, draft content, or trigger outreach, but they never build the layer where the system actually reasons through tradeoffs.

That is a mistake.

The strategy layer is what makes an AI-managed revenue function defensible. It is where multiple inputs become a plan, not just an action.

Council mode is how this works in practice. You run multiple agents in parallel over the same knowledge base, the same signals, the same current objectives. Each agent reasons independently. The output isn't a piece of content. It's a plan: with tradeoffs called out, confidence estimates attached, and a defined approval path before anything executes. You get a recommendation, not a result. You decide whether the recommendation becomes action.

Think of it like having three senior advisors who each read the same briefing document and then give you their independent read on the situation before you decide what to do. The difference is that your agent council never argues about credit, never brings personal stakes to the recommendation, and documents its reasoning in full every time. You can see exactly why each agent reached its conclusion, which means you can audit the logic before you act on it.

Example

A target account:

- Just hired a VP Commercial

- Raised a significant round last month

- Has three high-intent visits to pricing and case-study pages

- Is already in your CRM from six months ago

A weak system jumps straight to outreach.

A better strategy layer might produce:

- Recommendation 1: re-engage previous contact with an updated business-context message

- Recommendation 2: reach out to the new commercial leader with a tailored point of view on scaling GTM infrastructure

- Recommendation 3: route both into coordinated outreach with different messaging angles

- Confidence: medium-high

- Risk note: previous deal went quiet without a clear close reason, so messaging should acknowledge change rather than assume continuity

- Approval tier: human review required before any external send

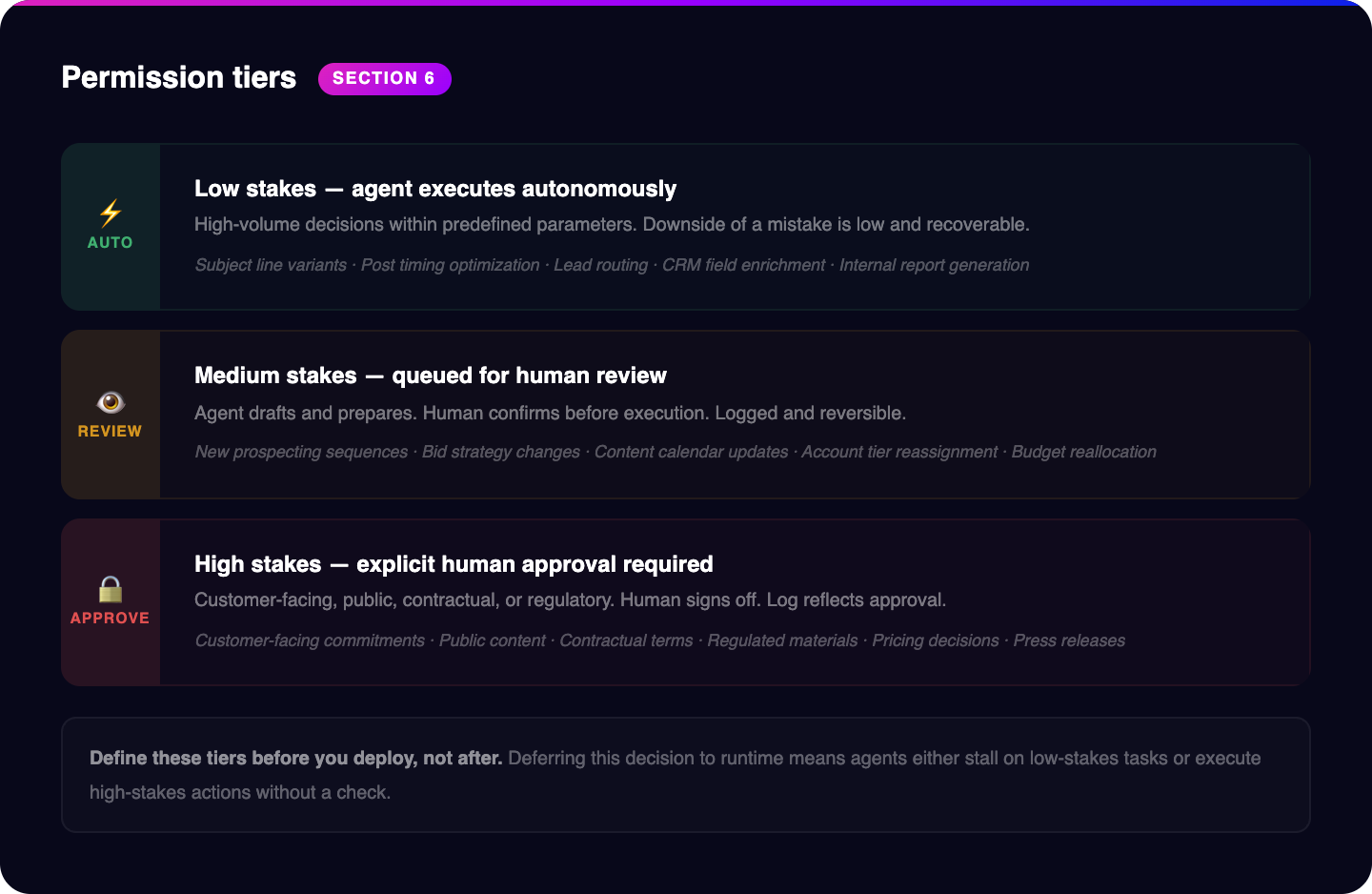

The permission structure determines what level of human involvement each type of decision requires. High-volume, low-stakes decisions execute automatically within predefined parameters. The agent writes subject line variants, posts at optimal times, routes an inbound lead to the right rep. No approval needed because the downside of a mistake is low and recoverable. Medium-stakes decisions queue for human review before execution. A new prospecting sequence targeting a specific account segment, a change to your bid strategy on a high-spend campaign. You look at it, confirm, and it runs. High-stakes decisions require explicit human approval before anything moves. Customer-facing commitments, public content, anything contractual or regulatory in nature. A human signs off. The log reflects that.

Design principle: Default to auto-execute. Escalate based on: (1) is the action externally visible, and (2) is it reversible? If externally visible AND irreversible = human approval. If externally visible but correctable = human review. If internal and correctable = auto-execute with logging.

The discipline here is defining these tiers before you deploy, not after. When you're designing the workflow, you decide what category it falls into and what the approval path looks like. If you defer that decision to runtime, you'll end up with either agents that stall waiting for approval on low-stakes tasks or agents that execute on things they shouldn't without a check. The structure has to be designed in advance.

The strategy layer is what separates an AI-enabled team from an AI-governed one. Build it before you need it.

What to do now

- Define where planning should happen before execution.

- Create 3 approval tiers: low, medium, and high stakes.

- For each key workflow, decide whether the system can execute automatically, recommend only, or require human review.

08 Execution - the use case library

Nothing in your commercial operation should be limited by human capacity anymore. That's not a vision statement. It's a design requirement. Whatever needs to happen, tell an agent. If it needs to happen regularly, set up a scheduled task. The agent runs it whether your team is in meetings, on calls, or asleep. The question isn't what you can automate. The question is what you haven't automated yet and why.

Agent Skills

Before we get into the use cases, I quickly want to share what skills are. Skills are essentially markdown (text) files that tell an agent exactly what to do in a specific situation. The best analogy is from the Matrix. When Neo needs to learn kung fu or trinity needs to learn how to fly a helicopter, they simply downloaded this knowledge and were able to execute that skill right away. This is the same for skills with agents. If you provide your agent with a skill on exactly what to do, simply given them access to that skill will enable them to use it. Want your agent to be able to do something new? Build a skill file and it will have that skill going forward. Skills also don’t need to be static one time builds. Agents can update their own skills as they learn and improve which becomes the flywheel that makes them better at a compounding rate.

The use cases below are not theoretical. Each one describes a workflow (or set of skills) you can build with current tools, current models, and infrastructure you likely have access to already. Some of them you can build in a day. Some will take a week to scope and connect properly. All of them produce real commercial output. Work through this list as an audit of your current operation. For every workflow where you'd answer "we do this manually," that's a place where a scheduled agent task should be running instead.

Marketing

SEO strategy and content pipeline

The agent connects directly to your Google Analytics instance and Search Console, pulls current performance data across all pages and queries, and runs a gap analysis between what you actually rank for and what your target accounts are searching. The output isn't a generic keyword list. It's a prioritized content calendar built on actual search volume, your current authority in adjacent topics, and the competitive difficulty of each gap. When you approve the calendar, agents draft the content aligned to your documented voice and positioning. Every published piece feeds its performance data back into the knowledge base at the end of each reporting cycle, which means the next calendar is built on real results, not assumptions. The strategy compounds because the inputs keep improving.

Most life sciences marketing teams have a guessing problem: they create content based on what feels relevant to their team rather than what their buyers are actually searching for. The agent closes that gap with data. You stop guessing and start publishing against documented demand. The difference in organic traffic compounds over 12 to 18 months.

Video and content repurposing

Record a video on Monday. By Tuesday, without a human touching a single technical step, that video is published on YouTube with an optimized title, description, and tag set built from actual search data in your niche. The workflow runs from source file to transcript to edited clip to animated version to upload with metadata. The same transcript that powers the YouTube description becomes a LinkedIn post series, a section in your next newsletter, and a blog summary with internal links to related content. You recorded it once. The distribution happened automatically. Most teams leave 80% of the value of every piece of content on the table because repurposing is always the last thing anyone gets to. An agent doesn't have a to-do list to get through first.

Competitive intelligence loop

Your agent monitors competitor websites, pricing pages, job postings, press releases, and LinkedIn activity on a continuous basis. Every Monday morning, you get a structured brief: what changed last week, what it signals about their strategy, where you now have a positioning opening they've left exposed. A competitor quietly expanded their service offering into a territory adjacent to yours. Their job postings show three new regulatory affairs hires. Their LinkedIn activity went quiet on a topic they were loud about six months ago. You see all of it before your team starts their week. No one spent time on research. No monitoring dashboards to check. The brief arrives and the team responds.

Example: Weekly competitive brief, built while you sleep

# Competitive Intelligence - Skill File

## Trigger

Run every Sunday night. Monitor the top 5 named competitors.

## Steps

1. WEBSITE CHANGES: Check each competitor's product pages, pricing

page, and careers page. Diff against last week's snapshot. Flag:

new product features, pricing changes, new messaging language,

removed pages or features.

2. JOB POSTINGS: Pull all new job postings from the last 7 days.

Categorize by function (R&D, commercial, operations, regulatory).

Flag postings that signal strategic moves: new therapeutic areas,

new geographies, new capabilities.

3. CLINICAL & REGULATORY: Check ClinicalTrials.gov for any trial

updates involving competitor products or platforms. Check FDA

databases for new clearances, approvals, or warning letters.

4. PUBLICATIONS: Search PubMed for new publications authored by

competitor R&D teams. Flag any that introduce new data on

performance, accuracy, or methodology.

5. LINKEDIN ACTIVITY: Pull recent posts from competitor executive

teams (CEO, CSO, VP Commercial). Note topic shifts, conference

appearances, partnership mentions.

6. SYNTHESIS: For each competitor, produce:

- What changed this week (facts only)

- What it likely signals (analysis)

- Positioning implications for us (recommended response)

- Update priority (high / medium / low)

7. KB UPDATE: If any finding changes our competitive positioning,

draft an update to the relevant battlecard in the knowledge

base. Flag for human review before committing.

## Output format

Monday morning brief. One page per competitor. Lead with the

highest-priority finding across all five.

## Constraints

- Separate facts from interpretation clearly.

- Never speculate on competitor financials without a public source.

- Flag when a data source was unavailable or returned errors.

A sample Monday brief entry might read:

"[Competitor A] - HIGH PRIORITY.- Added 'AI-Powered Analysis' messaging to their platform product page on Thursday. Previously positioned as manual workflow tool.

- Three new job postings: ML Engineer, Data Scientist, and Head of AI Product. Their CSO published a LinkedIn post about 'the future of AI in assay development' that received 3x their normal engagement.

- Signal: they're pivoting toward AI capabilities and will likely announce a product update at the SLAS conference in May. Positioning implication: our AI capabilities are mature and production-tested with 100+ clients. If they're announcing a v1, we should lean into our track record and case study depth in outbound targeting their current customers.

- Recommended: update battlecard to add their AI messaging language and draft a comparison one-pager before SLAS.

- Priority: high."

Without this workflow, someone on the team would need to manually check five competitor websites, scroll through job boards, search PubMed, and monitor LinkedIn every week. In practice, that means it happens inconsistently or not at all, and competitive shifts get noticed months late. The agent does it every Sunday night and the brief is waiting when the team opens Slack on Monday morning.

Lifecycle marketing

The agent segments your email list by actual behavior, not demographic fields. Contacts who opened the last three sends but never clicked. Contacts who haven't opened in 60 days. Contacts who clicked the pricing page three separate times but never booked a call. Each segment gets variant copy drafted by the agent, grounded in what you know about where they are in the buying process and what's moved similar contacts in the past. You approve the variants. They send. The results feed back into the knowledge base. The next campaign isn't a reset. It's a refinement built on what the last one revealed.

The reason most lifecycle programs underperform is not bad copy. It's that everyone on the list gets the same message at the same time regardless of what they've actually done. Behavioral segmentation is the fix, and most teams don't implement it consistently because it requires someone to maintain the logic as the list grows and behavior patterns shift. The agent maintains it automatically. The segmentation stays current because the agent checks it on every send cycle, not just when someone remembers to update the rules.

CRO and conversion optimization

The agent pulls session recording data, heatmaps, and conversion funnel metrics and runs a structured analysis of where your visitors are dropping. Not general impressions. Specific findings: the fold where 60% of visitors stop scrolling, the CTA button that gets scrolled past without a click on mobile, the form that has an 80% abandonment rate specifically at field three. For each friction point, the agent proposes a testable hypothesis with a specific change. You approve the test, it runs, and the outcome gets documented. You're not guessing what's broken on your site. You're running a continuous improvement process with data behind every decision.

Paid ads optimization

The agent monitors campaign performance across every channel you run, identifies ad sets where cost-per-click is trending up or conversion rate is trending down, and proposes specific changes: new copy variants, adjusted targeting parameters, budget reallocation between campaigns. Every proposal is grounded in what's worked historically in your knowledge base, not a generic best-practice recommendation. You approve the change. It implements. Results are tracked and logged. Your ad spend gets more efficient over time without requiring a dedicated analyst to watch dashboards every morning.

Event strategy and ROI

Before most teams decide which conferences to attend, the agent has already pulled historical lead data from every event you've run, calculated cost-per-qualified-meeting by event, identified the ICP match rate at each conference based on attendee profiles and exhibitor lists, and built a ranked event calendar for the year. After each event, it logs the leads generated, the meetings booked, and the eventual pipeline attributed to that event. Next year's event budget decision is built on actual return data. You stop going to events out of habit and start going to the ones that produce deals.

Inbound lead follow-up

Speed to lead is one of the most consistent predictors of conversion rate in B2B sales, and most teams are hours or days late on it because first response is manual and humans have other things to do. An agent is never late. The agent detects a new form fill the moment it hits your system, researches the company and contact in real time using public signals and CRM history, and drafts a personalized first response that references what they were looking at and what companies similar to theirs have done with you. It sends within minutes. The prospect doesn't feel like they submitted a form into a void. They feel like a person picked up the phone.

The research on this is consistent: contact rates drop sharply with every minute that passes after a form fill. By the time a human notices a new lead, looks them up, decides what to write, and sends something, the window for a warm first conversation is often already closing. An agent running this workflow changes the dynamic entirely. Your response arrives while the prospect is still thinking about why they filled out the form.

Converting web traffic into leads

Most of the companies visiting your website never fill out a form. Intent data providers can identify which accounts are browsing your key pages even when they're anonymous. The agent monitors that anonymous traffic, identifies accounts hitting your pricing page, product pages, or case study library, cross-references each account against your ICP criteria and existing CRM records (are they already in your pipeline? already a customer?), and triggers targeted outreach sequences for high-fit visitors who haven't converted. Accounts that were invisible become pipeline. You're not waiting for them to raise their hand.

Sales

Account research workflow

Before every call, the agent compiles a structured brief: complete CRM history of prior interactions with this account, key highlights from any recorded calls you've had with this contact or others at the company, recent news and company signals from the last 30 days, LinkedIn activity from the specific contact you're talking to, and the two or three proof points from your knowledge base most relevant to their situation. The brief arrives before the call starts. Your rep walks in with full context. There is no "let me pull that up" moment during the call, no scrambling through a CRM while the prospect is talking. Every call starts from an informed position.

The time cost of manual pre-call research is real and cumulative. A rep doing five calls a day and spending 20 minutes preparing for each one is spending over an hour and a half daily on research that produces inconsistent results depending on how much they remembered to look up. The agent does the same research in seconds, covers more ground, and produces a consistent format every time. Your reps spend that hour and a half on calls instead.

Here's a simplified version of the account research skill file that powers our pre-call briefing workflow. This is the actual instruction set an agent receives:

# Account Research Brief - Skill File

## Trigger

Run before any scheduled call. Pull the calendar event, extract the company name and contact name.

## Steps

1. CRM LOOKUP: Query CRM for all records matching the company. Pull: deal stage, last activity date, all logged interactions in the last 90 days, any open opportunities, assigned rep notes.

2. CONTACT RESEARCH: For the specific contact, pull: LinkedIn headline and recent posts (last 30 days), role tenure, previous companies, any mutual connections with our team.

3. COMPANY SIGNALS: Check last 30 days for: press releases, funding announcements, clinical trial updates (ClinicalTrials.gov), job postings in relevant functions, leadership changes.

4. KNOWLEDGE BASE MATCH: Based on company profile (size, stage, therapeutic area, technology), retrieve: the 2-3 most relevant proof points, the persona-matched objection handling, any relevant case studies.

5. OUTPUT FORMAT:

- Company snapshot (3-4 sentences: what they do, stage, recent momentum)

- Relationship history (prior touches, outcomes, open items)

- Recent signals (what changed in the last 30 days)

- Suggested talking points (2-3, tied to their specific situation)

- Relevant proof points (with source reference)

- Open questions to explore

## Constraints

- Never fabricate proof points. Only reference verified entries from the knowledge base.

- Flag if CRM data is stale (no activity logged in 60+ days).

- Keep the brief under 500 words. Reps won't read more than that before a call.

The output arrives in the rep's Slack channel 30 minutes before the call. A brief for a Series B cell therapy company might look like:

"Vertex Therapeutics: Series B ($45M, closed March 2026). Developing allogeneic CAR-T platform for solid tumors. Recently posted Director of Process Development and two Associate Scientists on LinkedIn, suggesting manufacturing scale-up. Phase I IND filing expected Q3 based on their latest press release. Your last interaction was a 15-minute discovery call on Feb 12 where Dr. Chen raised concerns about data integrity in their current LIMS system. She specifically mentioned audit trail gaps during their last FDA pre-IND meeting. Relevant proof point: [Genomics Co case study] reduced LIMS-related FDA findings by 60% in 4 months. Open question: Has their pre-IND meeting happened? If so, what was the feedback on their data systems?"

That took the agent about 90 seconds to compile. It would have taken a rep 20-30 minutes to do the same research manually, and they probably would have missed the job postings and the clinical trial timing.

Automatic CRM enrichment

Raw contact and company records in most CRMs are incomplete from the day they're created. Someone entered a name and email address. The firmographic data, funding stage, technology stack, recent news, and hiring patterns weren't filled in because no one had time. The agent runs an enrichment pass overnight on a schedule, filling in every field it can source, flagging records where it found conflicting information, and surfacing records where the enrichment data suggests the account has recently changed in ways that affect your ICP scoring. What used to take a team several days of manual research runs without anyone touching it. Your CRM reflects reality instead of the moment someone created the record.

1:1 prospecting at scale

This is not mail-merge with a first name. The agent pulls your target account list, researches each company individually using live signals, and drafts outreach for each contact that's grounded in what's actually happening at that account right now. A recent executive hire. A clinical trial that just moved to Phase III. A press release about an expansion into a new indication. A funding round that closed last month. The message sounds like someone did their homework because an agent did. You either automate this or review the drafts, approve the ones that are ready, and queue the rest for revision. Your first touches have context that most cold outreach never has.

Signal-to-multioutreach

A trigger event fires: an FDA warning letter hits a competitor's client. A target account announces a Series B. A new VP of Supply Chain joins at a company you've been watching. The agent detects the trigger, identifies the relevant contacts at the account, drafts coordinated outreach across email and LinkedIn grounded in the specific event and your positioning relative to it, and triggers the outreach to occur. The window between a trigger event and your outreach is minutes, not days. The right message lands at the right moment because you built a system that watches for those moments.

Example: FDA warning letter to first touch in under an hour

This is the workflow that best illustrates what "agentic" actually means in practice. No human initiates it. The agent detects a trigger, reasons about it, and drafts coordinated outreach, all before anyone on the team knows the signal exists.

# Signal-to-Outreach - Skill File

## Trigger

Continuous monitoring. Check FDA warning letter feed daily.

Cross-reference each new letter against target account list and

ICP criteria.

## When a match is found:

1. SIGNAL ANALYSIS: Read the warning letter. Extract: the specific

citations (21 CFR references), the product/facility involved,

the nature of the deficiency (data integrity, CGMP, laboratory

controls, etc.), and the response deadline.

2. ACCOUNT CONTEXT: Pull CRM history for this company. Have we

engaged before? Who did we talk to? What was the outcome? Pull

org chart: who runs quality, who runs regulatory, who runs

the facility cited.

3. KNOWLEDGE BASE MATCH: Based on the deficiency type, retrieve:

relevant case studies where we helped remediate similar issues,

specific proof points tied to the citation category, persona-

matched messaging for quality/regulatory buyers under pressure.

4. FIND CONTACTS: Based on the personas in the knowledge base:

- Search our CRM for relevant contacts at the company

- Search our lead database to find new conatacts don't have in the CRM

- Score and rank based on fit and priority

- Choose the top 3 best scoring leads and provide your reasoning

4. DRAFT OUTREACH: Write separate messages for each lead:

- Email: reference the specific deficiency type (not the warning

letter directly - lead with their problem), position

relevant capability, include proof point

- LinkedIn: shorter, more consultative, suggest a resource

- Use our message writing skill and follow all instructions

5. ROUTING: Queue drafts for human review. Flag urgency level

based on FDA response deadline.

## Constraints

- Only draft outreach if we have a relevant case study or proof

point. No generic capability pitches.

- Run the final messaging through the messaging reviewer skill file

and iterate until everything passes

- Human approval required before any message sends.

The agent detects a warning letter issued to a mid-size CDMO for data integrity deficiencies in their quality lab. It cross-references against the target list and finds a match. It pulls the CRM history, we had an intro call 8 months ago with their VP of Operations but the deal went cold. It retrieves a case study where a similar CDMO resolved comparable data integrity gaps using our platform in under 90 days. It drafts an email to the VP of Quality that leads with the challenge of maintaining audit-ready data integrity across multiple sites, references the case study outcome, and offers a 20-minute walkthrough. It drafts a LinkedIn message to the VP of Regulatory that's shorter and more consultative.

Both drafts are in the team's review queue or you can just send these messages automatically. The window between the FDA publishing the letter and our first touch is faster than any human could do. A team doing this manually would need someone to check the FDA site, read the letter, research the company, decide whether it's relevant, look up the right contacts, and draft the outreach. That's a half-day of work if it happens at all. Most teams never see the signal in the first place.

Cold lead re-engagement

Your CRM has dormant contacts you've written off. Some of them have changed since the last touch. The agent audits those dormant records, compares them against current ICP signals. Has the company grown? Are they hiring in relevant functions? Did they close a new funding round? Is there a clinical trial update that changes the timing calculus? And identifies the contacts that now fit your current ICP better than they did when you stopped reaching out. For those contacts, the agent triggers re-engagement sequences that reference what's changed, not generic "just checking in" messages. Accounts you stopped pursuing come back into active pipeline.

If you have previous call notes, you can surface what came up in the past and share new relevant content as you release it. For instance, you release a new whitepaper or abstract, have your agent go through the CRM to see who that new data should be sent to and why. It can build that list for you and then get it out to those contacts without having to create custom filters in the CRM to figure out who should get it without good reasoning.

Trigger-based follow-up sequencing

A prospect clicked the case study link you sent. A different prospect opened the email three times but didn't click anything. A third one went cold after a warm reply two weeks ago. Each of those behaviors indicates a different thing about where that person is, and each deserves a different follow-up. The agent detects the behavior, maps it to the appropriate branch in your sequence logic, drafts the message, and sends on your schedule. No one has to track who clicked what and manually select the next step. No deals go cold because someone forgot to follow up. The sequence runs on the behavior, not on someone remembering.

Daily call prep and in-person visit briefing

For field reps visiting accounts in person, the stakes of walking in underprepared are higher than a missed call detail. The agent builds a briefing document specifically optimized for in-person visits: complete account history and relationship timeline, full contact background and LinkedIn activity, most recent signal data for the account, suggested talking points tied to the account's specific situation, and any open questions or unresolved items from the last conversation. The briefing goes to the rep's phone before they leave the office. Every rep walks in prepared, every time, regardless of how much they remembered to review beforehand.

Sales call co-pilot

During a live call, the agent sits in a side window with access to your full knowledge base. The rep encounters an objection about validation data for a specific assay type. They type a quick query. The agent surfaces the relevant case study, the specific data point, and the framing that's worked in similar conversations. The competitor's name comes up. The agent pulls the relevant comparison points from your competitive positioning library. The rep gets the technical specification they couldn't remember off the top of their head in four seconds. No "let me follow up on that" for things that should be at your fingertips. The knowledge base becomes accessible in real time, not just in prep.